A terrifying AI-generated woman is lurking in the abyss of latent space

There's a ghost in the machine. Machine learning, that is.

We are all regularly amazed by AI's capabilities in writing and creation, but who knew it had such a capacity for instilling horror? A chilling discovery by an AI researcher finds that the "latent space" comprising a deep learning model's memory is haunted by at least one horrifying figure: a bloody-faced woman now known as "Loab."

(Warning: Disturbing imagery ahead.)

But is this AI model truly haunted, or is Loab just a random confluence of images that happens to come up in various strange technical circumstances? Surely it must be the latter unless you believe spirits can inhabit data structures, but it's more than a simple creepy image — it's an indication that what passes for a brain in an AI is deeper and creepier than we might otherwise have imagined.

Loab was discovered — encountered? summoned? — by a musician and artist who goes by Supercomposite on Twitter (this article originally used her name but she said she preferred to use her handle for personal reasons, so it has been substituted throughout). She explained the Loab phenomenon in a thread that achieved a large amount of attention for a random creepy AI thing, something there is no shortage of on the platform, suggesting it struck a chord (minor key, no doubt).

Supercomposite was playing around with a custom AI text-to-image model, similar to DALL-E or Stable Diffusion but not either of them, and specifically experimenting with "negative prompts."

Ordinarily, you give the model a prompt, and it works its way toward creating an image that matches it. If you have one prompt, that prompt has a "weight" of one, meaning that's the only thing the model is working toward.

You can also split prompts, saying things like "hot air balloon::0.5, thunderstorm::0.5" and it will work toward both of those things equally — this isn't really necessary, since the language part of the model would also accept "hot air balloon in a thunderstorm" and you might even get better results.

But the interesting thing is that you can also have negative prompts, which cause the model to work away from that concept as actively as it can.
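To make the weighting syntax concrete, here is a minimal sketch of how a "text::weight" prompt string could be broken into parts. The function name `parse_weighted_prompt` is hypothetical, and the exact grammar the custom model accepts is not documented here, so the comma-and-double-colon format is an assumption based only on the example above.

```python
from __future__ import annotations


def parse_weighted_prompt(prompt: str) -> list[tuple[str, float]]:
    """Split 'text::weight, text::weight' into (text, weight) pairs.

    Assumed grammar (not the real model's parser): parts are separated
    by commas, and an optional '::number' suffix sets the weight.
    """
    parts = []
    for chunk in prompt.split(","):
        chunk = chunk.strip()
        if not chunk:
            continue
        text, separator, weight = chunk.rpartition("::")
        if separator:
            parts.append((text.strip(), float(weight)))
        else:
            # No explicit weight: a lone prompt gets a weight of one.
            parts.append((chunk, 1.0))
    return parts


print(parse_weighted_prompt("hot air balloon::0.5, thunderstorm::0.5"))
print(parse_weighted_prompt("thunderstorm::-1"))  # a negative prompt
```

A weight below zero is what turns an ordinary prompt into a negative one. This sketch also treats every comma as a separator, which a real parser may not do.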

Minus world

This process is far less predictable, because no one knows how the data is actually organized in what one might anthropomorphize as the "mind" or memory of the AI, known as latent space.

"The latent space is kind of like you’re exploring a map of different concepts in the AI. A prompt is like an arrow that tells you how far to walk in this concept map and in which direction," Supercomposite told me.

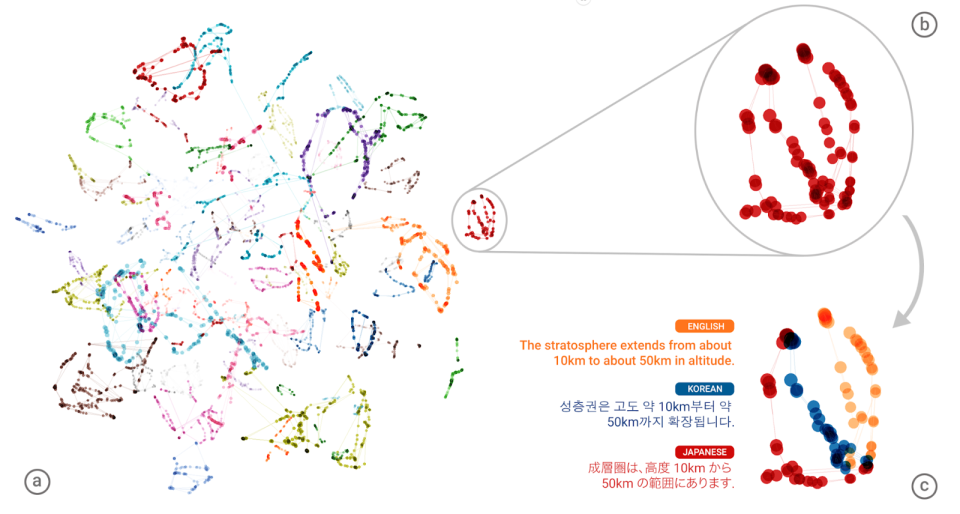

Here's a helpful rendering of a much, much simpler latent space in an old Google translation model working on a single sentence in multiple languages:

The latent space of a system like DALL-E is orders of magnitude larger and more complex, but you get the general idea. If each dot here were a million spaces like this one, it would probably be a bit more accurate. Image Credits: Google

"So if you prompt the AI for an image of 'a face,' you'll end up somewhere in the middle of the region that has all the images of faces and get an image of a kind of unremarkable average face," she said. With a more specific prompt, you'll find yourself among the frowning faces, or faces in profile, and so on. "But with a negatively weighted prompt, you do the opposite: You run as far away from that concept as possible."

But what's the opposite of "face"? Is it the feet? Is it the back of the head? Something faceless, like a pencil? While we can argue it amongst ourselves, in a machine learning model it was decided during the process of training, meaning however visual and linguistic concepts got encoded into its memory, they can be navigated consistently — even if they may be somewhat arbitrary.
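The arrow metaphor can be sketched in a few lines. This is a toy illustration, not how any real model stores concepts: the two-dimensional `CONCEPT_MAP`, its coordinates and the helper names are all made up for the example, and a real latent space has vastly more dimensions. It shows only that a negative weight flips the arrow, and that wherever the flipped arrow lands is fixed by the layout of the map.

```python
import math

# Hypothetical 2D "concept map"; the coordinates are invented for illustration.
CONCEPT_MAP = {
    "face": (1.0, 0.0),
    "logo": (-1.0, 0.2),
    "landscape": (0.0, 1.0),
}


def prompt_arrow(weighted_terms):
    """Sum each concept's vector scaled by its weight."""
    x = sum(weight * CONCEPT_MAP[name][0] for name, weight in weighted_terms)
    y = sum(weight * CONCEPT_MAP[name][1] for name, weight in weighted_terms)
    return (x, y)


def nearest_concept(point):
    """Return the concept whose location is closest to the given point."""
    return min(CONCEPT_MAP, key=lambda name: math.dist(point, CONCEPT_MAP[name]))


toward = prompt_arrow([("face", 1.0)])
away = prompt_arrow([("face", -1.0)])
print(nearest_concept(toward))  # face
print(nearest_concept(away))    # logo, but only because of this toy layout
```

The "opposite" of a concept is whatever happens to sit on the far side of the map, which is why it can be consistent without being intuitive.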

Image Credits: Supercomposite

We saw a related concept in a recent AI phenomenon that went viral because one model seemed to reliably associate some nonsense words with birds and insects. But it wasn't that DALL-E had a "secret language" in which "Apoploe vesrreaitais" means birds; it's just that the nonsense prompt basically had it throwing a dart at a map of its mind and drawing whatever it landed near, in this case birds because the first word is kind of similar to some scientific names. So the arrow just pointed generally in that direction on the map.

Supercomposite was playing with this idea of navigating the latent space, having given the prompt of "Brando::-1," which would have the model produce whatever it thinks is the very opposite of "Brando." It produced a weird skyline logo with nonsense but somewhat readable text: "DIGITA PNTICS."

Weird, right? But again, the model's organization of concepts wouldn't necessarily make sense to us. Curious, Supercomposite wondered if she could reverse the process. So she put in the prompt: "DIGITA PNTICS skyline logo::-1." If this image was the opposite of "Brando," perhaps the reverse was true too and it would find its way to, perhaps, Marlon Brando?

Instead, she got this:

Image Credits: Supercomposite

Over and over she submitted this negative prompt, and over and over the model produced this woman, with bloody, cut or unhealthily red cheeks and a haunting, otherworldly look. Somehow, this woman — whom Supercomposite named "Loab" for the text that appears in the top-right image there — reliably is the AI model's best guess for the most distant possible concept from a logo featuring nonsense words.

What happened? Supercomposite explained how the model might think when given a negative prompt for a particular logo, continuing her metaphor from before.

"You start running as fast as you can away from the area with logos," she said. "You maybe end up in the area with realistic faces, since that is conceptually really far away from logos. You keep running, because you don’t actually care about faces, you just want to run as far away as possible from logos. So no matter what, you are going to end up at the edge of the map. And Loab is the last face you see before you fall off the edge."

Preternaturally persistent

Image Credits: Supercomposite

Negative prompts don't always produce horrors, let alone so reliably. Anyone who has played with these image models will tell you it can actually be quite difficult to get consistent results for even very straightforward prompts.

Put in one for "a robot standing in a field" four or 40 times and you may get as many different takes on the concept, some hardly recognizable as robots or fields. But Loab appears consistently with this specific negative prompt, to the point where it feels like an incantation out of an old urban legend.

You know the type: "Stand in a dark bathroom looking at the mirror and say 'Bloody Mary' three times." Or even earlier folk instructions of how to reach a witch's abode or the entrance to the underworld: Holding a sprig of holly, walk backward 100 steps from a dead tree with your eyes closed.

"DIGITA PNTICS skyline logo::-1" isn't quite as catchy, but as magic words go the phrase is at least suitably arcane. And it has the benefit of working. Only on this particular model, of course: every AI platform's latent space is different, though who knows if Loab may be lurking in DALL-E or Stable Diffusion too, waiting to be summoned.

Loab as an ancient statue, but it's unmistakably her. Image Credits: Supercomposite

In fact, the incantation is strong enough that Loab seems to infect even split prompts and combinations with other images.

"Some AIs can take other images as prompts; they basically can interpret the image, turning it into a directional arrow on the map just like they treat text prompts," explained Supercomposite. "I used Loab’s image and one or more other images together as a prompt … she almost always persists in the resulting picture."

Sometimes more complex or combination prompts treat one part as more of a loose suggestion. But ones that include Loab seem not just to veer toward the grotesque and horrifying, but to include her in a very recognizable fashion. Whether she's being combined with bees, video game characters, film styles or abstractions, Loab is front and center, dominating the composition with her damaged face, neutral expression and long dark hair.

It's unusual for any prompt or imagery to be so consistent — to haunt other prompts the way she does. Supercomposite speculated on why this might be.

"I guess because she is very far away from a lot of concepts and so it’s hard to get out of her little spooky area in latent space. The cultural question, of why the data put this woman way out there at the edge of the latent space, near gory horror imagery, is another thing to think about," she said.

Although it's an oversimplification, latent space really is like a map, and the prompts like directions for navigating it — and the system draws whatever ends up being around where it's asked to go, whether it's well-trodden ground like "still life by a Dutch master" or a synthesis of obscure or disconnected concepts: "robots battle aliens in a cubist etching by Dore." As you can see:

Image Credits: TechCrunch / DALL-E

A purely speculative explanation of why Loab exists has to do with how that map is laid out. As Supercomposite suggested, it's likely simply because company logos and horrific, scary imagery are very far from one another conceptually.

A negative prompt doesn't mean "take 10 data steps in the other direction," it means keep going as far as you can, and it's more than possible that images at the farthest reaches of an AI's latent space have more extreme or uncommon values. Wouldn't you organize it that way, with stuff that has lots of commonalities or cross-references in the "center," however you define that — and weird, wild stuff that's rarely relevant out at the "edge"?
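The "keep going as far as you can" idea can be illustrated with another toy sketch. Everything here is an assumption made for the example: the map is a bounded square, `LOGO_ARROW` is an invented direction standing in for "logos," and the walker simply steps until it can move no farther. Real models do not literally work this way, but the sketch shows why running away without a stopping point can end at the same spot no matter where you begin.

```python
import random

MAP_LIMIT = 1.0            # toy map is the square [-1, 1] x [-1, 1]
LOGO_ARROW = (-0.6, -0.8)  # hypothetical direction pointing toward "logos"


def clamp(value, limit=MAP_LIMIT):
    """Keep a coordinate inside the edges of the toy map."""
    return max(-limit, min(limit, value))


def run_away(start, arrow, weight=-1.0, step=0.1, max_steps=1000):
    """Walk along weight * arrow until the edge of the map stops all movement."""
    x, y = start
    dx, dy = weight * arrow[0], weight * arrow[1]
    for _ in range(max_steps):
        next_x, next_y = clamp(x + step * dx), clamp(y + step * dy)
        if (next_x, next_y) == (x, y):
            break  # pinned against the edge; nowhere farther to go
        x, y = next_x, next_y
    return (x, y)


rng = random.Random(0)
starts = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(5)]
ends = {run_away(start, LOGO_ARROW) for start in starts}
print(ends)  # every start ends in the same corner: {(1.0, 1.0)}
```

In this toy, the corner plays the role of the last thing you see before falling off the edge. Whether a real model's latent space has anything like a literal edge is part of the speculation.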

Therefore negative prompts may act like a way to explore the frontier of the AI's mind map, skimming the concepts it deems too outlandish to store among prosaic concepts like happy faces, beautiful landscapes or frolicking pets.

The dark forest of the AI subconscious

Image Credits: Devin Coldewey

The unnerving fact is no one really understands how latent spaces are structured or why. There is of course a great deal of research on the subject, and some indications that they are organized in some ways like how our own minds are — which makes sense, since they were more or less built in imitation of them. But in other ways they have totally unique structures connecting across vast conceptual distances.

To be clear, it's not as if there is some clutch of images specifically of Loab waiting to be found — they're definitely being created on the fly, and Supercomposite told me there's no indication the digital cryptid is based on any particular artist or work. That's why latent space is latent! These images emerged from a combination of strange and terrible concepts that all happen to occupy the same area in the model's memory, much like how in the Google visualization earlier, languages were clustered based on their similarity.

From what dark corner or unconscious associations sprang Loab, fully formed and coherent? We can't yet trace the path the model took to reach her location; a trained model's latent space is vast and impenetrably complex.

The only way we can reach the spot again is through the magic words, spoken while we step backward through that space with our eyes closed, until we reach the witch's hut that can't be approached by ordinary means. Loab isn't a ghost, but she is an anomaly, yet paradoxically she may be one of an effectively infinite number of anomalies waiting to be summoned from the farthest, unlit reaches of any AI model's latent space.

It may not be supernatural … but it sure as hell ain't natural.