Will your driverless car ever choose to kill you?

The science fiction writer Isaac Asimov – the author of I, Robot – famously wrote a number of ‘laws’ for robots which governed how they could behave.

The first was, ‘A robot may not injure a human being or, through inaction, allow a human being to come to harm.’

But what about when robots have to decide WHICH humans to kill?

With self-driving cars becoming a reality (Addison Lee has promised to have them on the road by 2021), it’s a pertinent question being given considerable thought.

Should a machine decide to kill its own owner, or multiple other people?

Self-driving cars will, in theory, be much safer than cars driven by people – RoSPA research shows that 95% of accidents involve human error, and that in 76% of cases a human is solely to blame.

But the probability of an accident will never drop to zero – and in some cases, software will have to decide who lives and who dies.

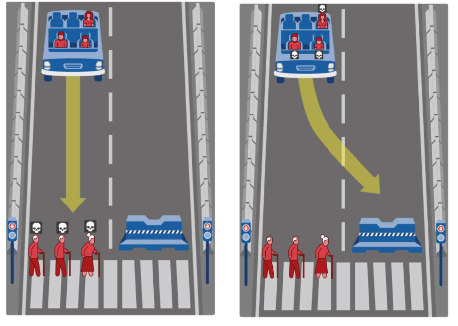

Say, for example, a car’s brakes have failed. If the car stays on course, it will plough into two old people; but swerving to avoid them means it will hit a pram and kill a baby.

Which is the ‘preferred’ course of action?

In 2014, MIT experts came up with a way to work out what people think is the ‘correct’ decision for machines to make – a version of the ‘trolley problem’, a well-known thought experiment about an out-of-control trolley.

MIT experts posed these questions in an online quiz, ‘Moral Machine’. It went viral, drawing answers from millions of people in 233 countries and territories, according to MIT Technology Review.

With 40 million answers, it’s become one of the largest studies ever on what is ‘moral’ for a machine to do.

The quiz asked whether it was preferable to kill the young or the old, people who crossed legally or people who crossed illegally, and the car’s own passengers or pedestrians. It then grouped responses by the respondents’ country, religion, gender, age and so on.

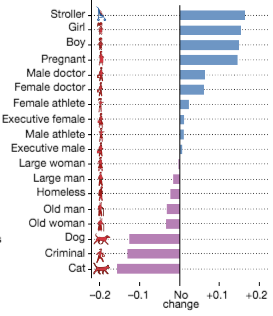

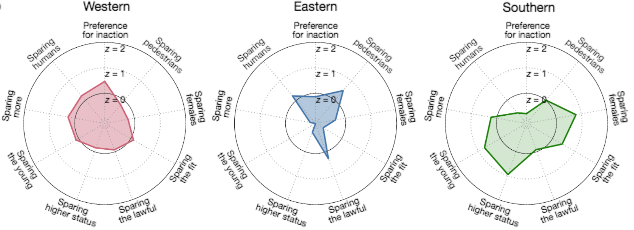

The researchers published their findings in the journal Nature last week. Broadly, respondents in Western countries placed more emphasis on sparing the greater number of lives.

In countries such as Japan and China, people were less likely to spare the young at the expense of the old (perhaps reflecting the greater respect for the elderly in those countries).

People from poorer countries were also more likely to say that people with higher social status should be spared, the researchers said.

Overall, the research says: ‘The strongest preferences are observed for sparing humans over animals, sparing more lives, and sparing young lives. Accordingly, these three preferences may be considered essential building blocks for machine ethics, or at least essential topics to be considered by policymakers.’

Here are some of the charts that illustrate the findings.

What this means

The findings could impact car design – and laws around how AI ‘drives’, the researchers say.

Paper author Edmond Awad said: ‘In the last two, three years more people have started talking about the ethics of AI.’

Previous MIT research shows that most people agree that a driverless car should sacrifice its passenger to save, say, 10 pedestrians – with 76% agreeing that cars should be programmed in this way.

But when people were asked if they would buy a car programmed like this, most said they would prefer to buy one programmed to protect the passenger.

Dr Iyad Rahwan, from the Massachusetts Institute of Technology (MIT) Media Lab, said: ‘Most people want to live in a world where cars will minimise casualties, but everybody wants their own car to protect them at all costs.’

But what might change is the whole idea of car ownership.

Experts have suggested that when self-driving cars become more popular, the idea of ‘owning’ a car may change.

So, for instance, a person might summon a self-driving car to get to work, then summon another for the journey home, much as one does with an Uber today – saving on parking fees and other expenses of having a car ‘waiting’ 24 hours a day.

If driverless cars are adopted widely, it’s almost certain that the laws around driving will change – and that could include laws governing what their software can (and can’t) do.

So while people might want a car which protects them at all costs – regardless of how many lives are lost – it might end up being impossible to buy one, as no manufacturer would want the legal responsibility.

So the questions posed by the ‘Moral Machine’ quiz are likely to become real.