Can a robot feel? Researchers create artificial skin with a better sense of touch

The androids in “Blade Runner” sometimes seem more human than humans, but to feel tears in the rain, you need a sense of touch. Now researchers are closing in on just that: robotic skin that feels.

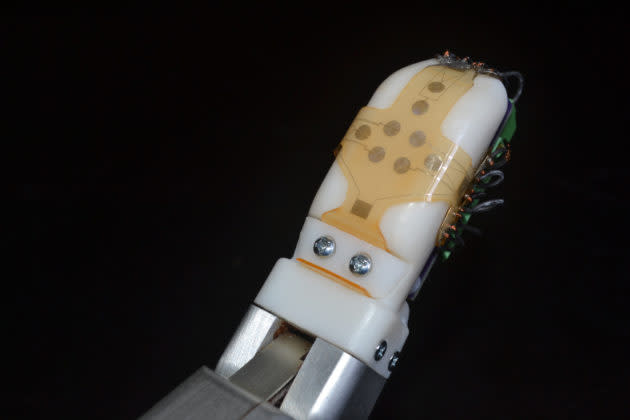

Engineers at the University of Washington and UCLA have developed stretchable, sensor-equipped skin that can be wrapped over robotic fingers or prosthetic limbs to provide electrical impulses for tactile feedback.

The aim of the project isn’t to let androids wax poetic, but to help humans handle robotic tools more precisely.

“Robotic and prosthetic hands are really based on visual cues right now — such as, ‘Can I see my hand wrapped around this object?’ or ‘Is it touching this wire?’ But that’s obviously incomplete information,” senior author Jonathan Posner, a UW professor of mechanical engineering and chemical engineering, explained today in a news release.

“If a robot is going to dismantle an improvised explosive device, it needs to know whether its hand is sliding along a wire or pulling on it,” he said. “To hold on to a medical instrument, it needs to know if the object is slipping. This all requires the ability to sense shear force, which no other sensor skin has been able to do well.”

The research paper about the robo-skin project was published in the journal Sensors and Actuators A: Physical.

The robo-skin, manufactured at the Washington Nanofabrication Facility at UW, takes advantage of the same type of silicone rubber that’s used in swimming goggles. The rubbery sheet has tiny serpentine channels running through it, each thinner than a human hair.

Those channels are filled with electrically conductive liquid metal that doesn’t crack or fatigue the way wires do when they’re stretched. When the skin rubs against objects, that action compresses and stretches out the microfluidic channels in ways that affect how electrical impulses pass through the metal.

The impulses can be interpreted to get a sense of the shear forces that are involved when fingers slip and slide against objects.
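For readers who want a feel for the physics, here is a minimal Python sketch of how stretching a liquid-filled channel could change its electrical resistance, and how two such readings might be compared to infer the direction of a shear force. It is an illustration under stated assumptions, not the researchers' actual method: the resistivity value, the channel dimensions, the two-channel layout and the function names are all hypothetical, chosen only to make the arithmetic concrete.

```python
# Illustrative sketch only: all numbers and names below are assumptions,
# not values or methods taken from the research paper.

RESISTIVITY = 3e-7      # ohm-metres, assumed order of magnitude for a liquid metal
CHANNEL_LENGTH = 0.01   # metres, assumed
CHANNEL_AREA = 2.5e-9   # square metres (50 x 50 micrometres), assumed


def channel_resistance(resistivity, length, area):
    """Resistance of a uniform conductor: R = rho * L / A."""
    return resistivity * length / area


def stretched_resistance(r0, stretch):
    """Resistance after stretching a constant-volume channel.

    If the length grows by `stretch`, the cross-section shrinks by
    1 / stretch to conserve the liquid's volume, so R scales by stretch**2.
    """
    return r0 * stretch ** 2


def shear_estimate(r_left, r_right, r0):
    """Hypothetical differential readout from two channels on opposite
    sides of a fingertip. The sign suggests the direction of the shear,
    the magnitude how strongly the skin is being dragged sideways.
    """
    return (r_left - r_right) / r0


if __name__ == "__main__":
    r0 = channel_resistance(RESISTIVITY, CHANNEL_LENGTH, CHANNEL_AREA)
    # Assume a sideways drag stretches one channel 10% and compresses the other 10%.
    r_left = stretched_resistance(r0, 1.10)
    r_right = stretched_resistance(r0, 0.90)
    print(f"resting resistance: {r0:.3f} ohm")
    print(f"shear signal: {shear_estimate(r_left, r_right, r0):+.2f}")
```

The point of the differential reading is that a plain push, which would affect both channels alike, largely cancels out, while a sideways drag affects them in opposite ways and shows up as a signed signal.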

“It’s really following the cues of human biology,” said lead author Jianzhu Yin, who recently received his doctorate in mechanical engineering from UW and is now an engineer at Lightel, headquartered in Renton, Wash.

“Our electronic skin bulges to one side, just like the human finger does, and the sensors that measure the shear forces are physically located where the nail bed would be, which results in a sensor that performs with similar performance to human fingers,” Yin explained.

The third author of the study, UCLA’s Veronica Santos, said the skin’s structure makes it possible for robotic fingers to sense the shear forces as well as vibrations and the normal pressure of fingers pushing against the object.

“The fact that our latest skin prototype incorporates all three modalities creates many new possibilities for machine learning-based approaches for advancing robot capabilities,” Santos said.

Experiments have shown that the skin has a high level of sensitivity for light-touch applications such as opening doors, shaking hands, picking up packages and handling a phone.

The sensors can detect tiny vibrations occurring 800 times per second, which the researchers say outdoes the performance of human fingers. Just don’t tell the replicants.
