Facebook while black: Users call it getting 'Zucked,' say talking about racism is censored as hate speech

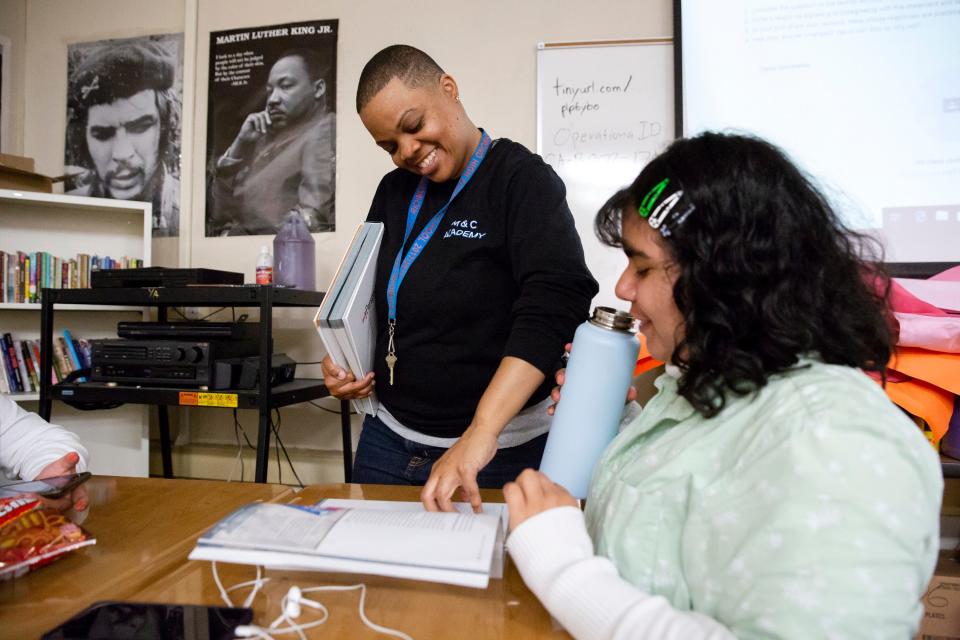

It was spirit week, and Carolyn Wysinger, a high school teacher in Richmond, California, was cheerfully scrolling through Facebook on a break between classes. Her classroom, with its black-and-white images of Martin Luther King Jr. and Che Guevara and a "Resist Patriarchy" sign, was piled high with colorful rolls of poster paper, the whiteboard covered with plans for pep rallies.

A post from poet Shawn William caught her eye. "On the day that Trayvon would've turned 24, Liam Neeson is going on national talk shows trying to convince the world that he is not a racist." While promoting a revenge movie, the Hollywood actor confessed that decades earlier, after a female friend told him she'd been raped by a black man she could not identify, he'd roamed the streets hunting for black men to harm.

For Wysinger, an activist whose podcast The C-Dubb Show frequently explores anti-black racism, the troubling episode recalled the nation's dark history of lynching, when charges of sexual violence against a white woman were used to justify mob murders of black men.

"White men are so fragile," she fired off, sharing William's post with her friends, "and the mere presence of a black person challenges every single thing in them."

It took just 15 minutes for Facebook to delete her post for violating its community standards for hate speech. She was warned that if she posted it again, she'd be banned for 72 hours.

Wysinger glared at her phone, but wasn't surprised. She says black people can't talk about racism on Facebook without risking having their posts removed and being locked out of their accounts in a punishment commonly referred to as "Facebook jail." For Wysinger, the Neeson post was just another example of Facebook arbitrarily deciding that talking about racism is racist.

"It is exhausting," she says, "and it drains you emotionally."

Black activists say hate speech policies and content moderation systems formulated by a company built by and dominated by white men fail the very people Facebook claims it's trying to protect. Not only are the voices of marginalized groups disproportionately stifled, Facebook rarely takes action on repeated reports of racial slurs, violent threats and harassment campaigns targeting black users, they say.

Many of these users now think twice before posting updates on Facebook, or they limit how widely their posts are shared. Yet few can afford to leave the single largest and most powerful social media platform for sharing information and creating community.

So to avoid being flagged, they use digital slang such as "wypipo," emojis or hashtags to elude Facebook's computer algorithms and content moderators. They operate under aliases and maintain backup accounts to avoid losing content and access to their community. And they've developed a buddy system to alert friends and followers when a fellow black activist has been sent to Facebook jail, sharing the news of the suspension and the posts that put them there.

They call it getting "Zucked," and black activists say these bans have serious repercussions, cutting people off not just from their friends and family for hours, days or weeks at a time, but often from the Facebook pages they operate for their small businesses and nonprofits.

A couple of weeks ago, Black Lives Matter organizer Tanya Faison had one of her posts removed as hate speech. "Dear white people," she wrote in the post, "it is not my job to educate you or to donate my emotional labor to make sure you are informed. If you take advantage of that time and labor, you will definitely get the elbow when I see you." After being alerted by USA TODAY, Facebook apologized to Faison and reversed its decision.

Even former employees are not immune. In November, former Facebook partnerships manager Mark Luckie called out Facebook for how it treats black users and black employees. "One of the platform's most engaged demographics and an unmatched cultural trendsetter is having their community divided by the actions and inaction of the company," he wrote in a Facebook post. "This loss is a direct reflection of the staffing and treatment of many of its black employees."

Facebook deleted his post, then hours later said it “took another look” and restored it.

'Black people are punished on Facebook'

"Black people are punished on Facebook for speaking directly to the racism we have experienced," says Seattle black anti-racism consultant and conceptual artist Natasha Marin.

Marin says she's one of Facebook's biggest fans. She created a "reparations" fund that's aided a quarter million people with small donations to get elderly folks transportation to medical appointments or to pay for prescriptions, to help single moms afford groceries or the rent or to get supplies for struggling new parents. More recently, she started a social media project spreading "black joy" rather than black trauma.

She was also banned by Facebook for three days for posting a screenshot of a racist message she received.

"For me as a black woman, this platform has allowed me to say and do things I wouldn’t otherwise be able to do," she says. "Facebook is also a place that has allowed things like death threats against me and my children. And Facebook is responsible for the fact that I am completely desensitized to the N-word.”

Seven out of 10 black U.S. adults use Facebook and 43 percent use Instagram, according to the Pew Research Center. Black millennials are even more engaged on social media. More than half of black millennials (55 percent) spend at least one hour a day on social media, 6 percent higher than all millennials, while 29 percent say they spend at least three hours a day, 9 percent higher than all millennials, Nielsen surveys found.

The rise of #BlackLivesMatter and other hashtag movements shows how vital social media platforms have become for civil rights activists. About half of black users turn to social media to express their political views or to get involved in issues that are important to them, according to the Pew Research Center.

These hashtag movements, coming against the backdrop of an upsurge in hate crimes, have helped put the deaths of unarmed African Americans by police officers on the public agenda, along with racial disparities in employment, health and other key areas.

"If I were to sit down with Mark Zuckerberg, the message I would want to get across to him is: You may not even realize how powerful a thing you have created. Entire revolutions could take place on this platform. Global change could happen. But that can’t happen if real people can’t take part," Marin says. "The challenge for these companies is to see black women as valuable resources. This is the wealth on the platform, the people pushing the platform forward. If anything, they should be supported."

"There should be policies and community standards that overtly support that kind of work," Marin says. "Maybe Mark Zuckerberg needs to sit down with a bunch of black women who use Facebook and just listen."

How Facebook judges what speech is hateful

For years, Facebook was widely celebrated as a platform that empowered people to bypass mainstream media or oppressive governments to directly tell their story. Now, in the eyes of some, it has assumed the role of censor.

With more than a quarter of the world's population on Facebook, the social media giant says it's wrestling with its unprecedented power to judge what speech is hateful.

All across the political spectrum, from the far right to the far left, Facebook gets flak for its judgment calls. To help sort what's allowed and what's not, it relies on a 40-page list of rules called "Community Standards," which were made public for the first time last year. Facebook defines hate speech as an attack against a "protected characteristic," such as race, gender, sexuality or religion. And each individual or group is treated equally. The rules are enforced by a combination of algorithms and human moderators trained to scrub hate speech from Facebook. From July to September 2018, Facebook removed 2.9 million pieces of content that it said violated its hate speech rules, more than half of which was flagged by its technology.

The tag team of algorithms and moderators frequently makes mistakes when flagging and removing content, Facebook acknowledges. And it has taken steps to try to make its system more accountable. Last year, Facebook began allowing users to file an appeal when their individual posts are removed. This year, the company plans to introduce an independent body of experts to review some of those appeals.

There are just too many sensitive decisions for Facebook to make them all on its own, Zuckerberg said last month. A string of violent attacks, including a mass shooting at two mosques in New Zealand, recently forced Facebook to reckon with the scourge of white nationalist content on its platform. "Lawmakers often tell me we have too much power over speech," Zuckerberg wrote, "and frankly I agree."

In late 2017 and early 2018, Facebook explored whether certain groups should be afforded more protection than others. For now, the company has decided to maintain its policy of protecting all racial and ethnic groups equally, even if they do not face oppression or marginalization, says Neil Potts, public policy director at Facebook. Applying more "nuanced" rules to the daily tidal wave of content rushing through Facebook and its other apps would be very challenging, he says.

Potts acknowledges that Facebook doesn't always read the room correctly, confusing advocacy and commentary on racism and white complicity in anti-blackness with attacks on a protected group of people. Facebook is looking into ways to identify when oppressed or marginalized users are "speaking to power," Potts says. And it's conducting ongoing research into the experiences of the black community on its platform.

"That's, on its face, the type of speech we want to encourage, but words and people aren't perfect, so it doesn't always come across as that. We are exploring additional refinements to our hate speech policy that will perhaps help remedy some of these situations," he says.

Facebook wants to make sure its policies "reflect how people speak about these topics." "That's the biggest thing," Potts says, "making sure we are in tune with this community and the way they actually speak about these topics, and making sure our policies are in line and in touch."

'Just another slap in the face'

Ayo Henry, a mother of four from Providence, Rhode Island, says Facebook's policies could not be more out of touch.

Last year, Henry was cut off by a kid on a bike wearing a Confederate sweatshirt when she pulled into the parking lot of a sandwich shop. She honked her horn. He responded twice with a racial slur.

She restrained herself in front of her children, but a few weeks later after leaving roller derby practice, Henry spotted the kid again, wearing the same sweatshirt. The boy tried to pedal away. She pulled out her phone. "I don't know what came over me. It was an impulsive decision," she says. "But I wanted to let him know that it wasn't OK."

He apologized, explaining he "wasn't in a good mood that day." She realized how young he was as his body trembled and his hands shook. She tried to offer him some motherly advice on why he should not use racial slurs.

Henry's video of the exchange was viewed more than 2 million times on Facebook. Within 48 hours, Facebook took the footage down, saying it ran afoul of its hate speech rules. Henry appealed the decision but Facebook refused to reverse it.

In the meantime, her Messenger inbox filled with hundreds of racial slurs, derogatory messages and threats that she would be raped or killed. Yet each time Henry tried to privately share the video with her friends on Messenger, Facebook blocked her.

Offers of support that poured in from around the country helped Henry develop a network of black activists. Starting last summer, she says, they all began noticing that just typing the phrase "white people" into a Facebook post could get their post flagged and their accounts suspended. Henry says Facebook has suspended her several times, once for calling on white women to get on board with a broader, more intersectional form of feminism.

It wasn't a Facebook post, but a comment on that post that triggered her longest suspension from Facebook.

Her Facebook post drew attention to an antique store in nearby Massachusetts that refused to remove racist memorabilia hanging on its walls, including a vintage advertisement for smoking tobacco with a caricature of two black men. "Still perpetuating white supremacy through 'nostalgia,'" she wrote.

One person challenged whether the image was in fact racist, so Henry replied with a similar image of a jigsaw puzzle box from the same era, which was labeled "Chopped Up N------."

That comment, which Facebook deleted, sent her to Facebook jail for a month. Henry appealed the suspension but Facebook would not relent. She says she knows of no one in the black community who has had a post reinstated by appealing.

"Black people in this country, and people of color in general, we endure a daily battle just to exist. Now we have to be careful not to complain too much about the various types of oppression publicly because, if you do, then you are going to be suppressed," she says. "It's difficult to navigate the framework of social media as a black person, just because racism is systemic and, when you realize it's ingrained so deeply in a system that is so influential on our country, then it's almost like a whole second burden to bear."

"Social media is supposed to be a way that people can come together and be able to communicate relatively freely," she says. "For us, it has become just another slap in the face."

Civil rights groups push for audit, accountability

Early on, the Black Lives Matter social justice movement turned to Facebook as an organizing tool. Yet its organizers say they were soon set upon by bands of white supremacists who targeted them with racial slurs and violent threats. In 2015, Color Of Change, which was formed after Hurricane Katrina to organize racial justice campaigns on the internet, began pressuring Facebook to stop the harassment of black activists by hate groups.

Chanelle Helm, a Black Lives Matter organizer from Louisville, Kentucky, says the threats intensified in the form of doxxing: posting organizers' addresses, phone numbers and photos on the internet. Faison, a founding member of the Sacramento chapter of Black Lives Matter, was stalked. "It got a lot more serious," Helm says. "They were threatening folks with doxxing of family members."

Facebook removed a group responsible for some of the harassment, but Color Of Change and other civil rights groups say they struggled to get the company to address other complaints. Late last year, The New York Times reported that Facebook had hired a Republican opposition research firm to discredit Color Of Change and other Facebook critics.

"What we continue to see time and time again is what's framed as race-neutral decision-making ends up being overtly hostile to the communities most in need of some of those free speech protections," says Brandi Collins-Dexter, senior campaign director at Color Of Change.

The Center for Media Justice began probing why content from people of color was being removed from Facebook in August 2016 when, at the request of law enforcement, Facebook shut down the video of a Baltimore woman, Korryn Gaines, who was live-streaming her standoff with police. Gaines was later shot and killed by a police officer in front of her 5-year-old son, who was also struck twice by gunfire. At the same time, Black Lives Matter activists and Standing Rock pipeline protesters in North Dakota were reporting that their content was being removed, too.

In 2016 and again in 2017, civil rights and other groups wrote letters urging Facebook to conduct an independent civil rights audit of its content moderation system and to create a task force to institute the recommendations.

Last May, Facebook agreed to an audit as it was trying to control the damage from revelations that a shadowy Russian organization posing as Americans had targeted unsuspecting users with divisive political messages to sow discord surrounding the 2016 presidential election. African Americans were one of the main targets of that organization, the Internet Research Agency, on Facebook. The same day Facebook gave in to demands from civil rights groups, it announced a second audit into allegations of anti-conservative bias led by former Senator Jon Kyl, an Arizona Republican.

There are few signs of progress in how Facebook deals with racially motivated hate speech against the African American community or the erasure of black users' speech, says Steven Renderos, senior campaign manager at the Center for Media Justice.

Last summer, after Nia Wilson, a black teenage girl, was stabbed to death by a white man wielding a knife at an Oakland, California, train station, black women gathered on Instagram to mourn.

“As we see another one of us being murdered to bleed out in the streets we can’t help but think: that could be me, that could be my daughter, a sister, my best friend,” black activist Rachel Cargle wrote. “You okay sis? I get it if you’re not. At this moment I feel heavy and distant and numb. I feel angry and deflated and heartbroken.”

Cargle asked that only women of color respond. "I needed to give us this space to check in on each other." Comments from black women poured in. “I’m scared. For my family, for my friends, for myself and for all the other black women out there,” one wrote.

Some white women objected to being left out of the conversation. Soon Instagram removed Cargle’s post, saying it violated guidelines on hate speech.

"There were hundreds of comments of black women being seen and heard by their peers, being loved and cared for by their sisters, being consoled and loved exactly as they needed it," Cargle wrote at the time. "DO YOU SEE THIS? DO YOU SEE HOW NOT ONLY ARE WE KILLED IN THE STREETS WE ARE ALSO PUNISHED FOR GRIEVING."

Instagram later reversed the decision.

Civil rights organizations say they've largely given up on Facebook voluntarily taking steps to protect black users, calling instead on Congress and the Federal Trade Commission to regulate the company. Color Of Change has asked Zuckerberg and COO Sheryl Sandberg to take part in a civil rights summit this spring, but they have not agreed to attend. Color Of Change is also pushing a resolution at Facebook’s shareholder meeting in May to replace Zuckerberg as chairman of the board.

"At the end of the day, Facebook hasn't tackled one of the biggest issues of most interest to the civil rights community, which is how it deals with content moderation and how the platform will become a place that civil rights are protected," Renderos says. "We, and a lot of organizations that we work with, are frankly tired of waiting for Facebook to decide what changes it's going to make for itself."

'I don't think Facebook cares'

Shaun Saunders, who works in public relations, says he doesn't think Facebook cares. Saunders is someone tech companies seek out when they want to tell their story to the media. He uses Facebook to connect with journalists. He's also been suspended from Facebook three times for speaking his mind about racism.

"I am exhausted by the notion that a platform can arbitrarily cut you off. I am not only cut off from my family and friends, I am cut off from my job and my craft. That's the part that really gets me," Saunders says. "It's not OK that black people are dying. Black people should not shut their mouths for identifying these things that are always happening."

"I would love to ask Mark Zuckerberg: 'What the hell are you guys doing? You guys are a hot mess.' I would say it to him just like that."

It's not just black people who have their posts removed. Andy Marra, executive director of the Transgender Legal Defense & Education Fund, says allies of black people run into trouble, too.

Marra's Facebook post in late January calling on Asian Americans to protect "black and brown who face the brunt of white supremacy" was removed by Facebook.

Twice Marra appealed the decision to take down her Facebook post, which shared an article from a popular blog showing an Asian man throwing up "white power" signs to antagonize Black Lives Matter protesters. "This post is expressing condemnation to anti-black racism. The post also articulates critical feedback about how other people of color – specifically those in the Asian community, including myself as an Asian person – should oppose racism in all of its forms," she wrote in one appeal that Facebook denied.

It was only when friends reached out to Facebook to plead her case that Marra's Facebook post was reinstated. Critics say having those kinds of connections is the only way that Facebook corrects content moderation errors, but it's not a channel available to just anyone seeking redress.

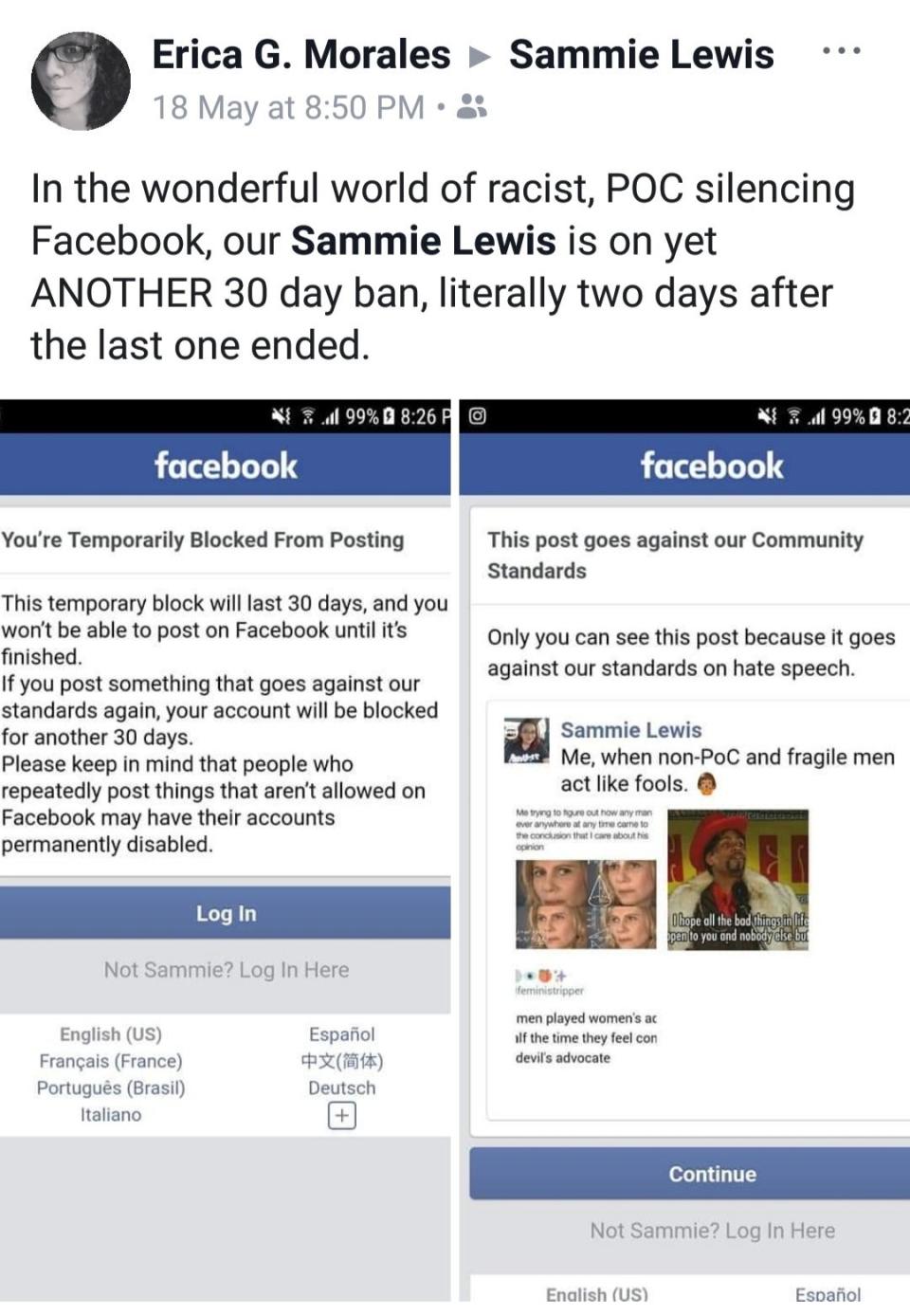

Take Samreen "Sammie" Lewis and Erica Morales, two activists of color, who say their Facebook page, Three Token Brown Girls, has been deleted three times, once just for the logo.

They say they each have been personally banned repeatedly, sometimes barely serving out one suspension before being hit with another. Lewis estimates she's been suspended from Facebook for half of the past year. Their protests go unheard, and, each time they have to rebuild their Facebook page from scratch, they lose followers.

"It basically says that we don't matter. The harm being caused to us perpetually, constantly is nothing to (Facebook)," Lewis says. "They'd much rather shut us up for their own comfort than acknowledge the harm they are causing."

In 2017, DiDi Delgado, a poet and black liberation organizer and activist, captured the growing anger in the black community with a Medium post provocatively titled: "Mark Zuckerberg Hates Black People." At the time, Delgado was simultaneously serving two Facebook bans for alleged hate speech.

"That means bigoted trolls lurked my page reporting anything and everything, hoping I’d be in violation of the vague 'standards' imposed by Facebook," Delgado wrote. "It’s kinda like how white people reflexively call the cops whenever they see a Black person outside. Except in this case it’s not my physical presence they find threatening, it’s my digital one."

Asked what has changed since she published the viral post, Delgado says nothing. "Black, LGBT, non-male and women identified users are still disproportionately banned for speaking out against oppression," she says.

These days, Delgado spends less time and energy on Facebook and, at times, refrains from speaking her mind there. "Sometimes it’s more important to keep that direct line of communication open than to risk getting banned with a public post," she says.

In the end, Wysinger made that same calculation. In February, Wysinger decided not to risk being booted off Facebook by republishing her post about Neeson, the actor. Just days before her 40th birthday, she did not want to get thrown in Facebook jail and miss the chance to celebrate with family and friends. But, she says, she wants Facebook to know that, in silencing black people, the company is causing them harm.

"Facebook is not looking to protect me or any other person of color or any other marginalized citizen who are being attacked by hate speech," she says. "We get trolls all the time. People who troll your page and say hateful things. But nobody is looking to protect us from it. They are just looking to protect their bottom line."

"Anything that I share, I'm sharing because it's something personal that happened to me. That's what Facebook has always built its platform on," she says. "It used to ask: How are you feeling? Well, today, I am feeling targeted by cis hetero white men."


This article originally appeared on USA TODAY: Facebook racism? Black users say racism convos blocked as hate speech