Here's How Facebook Could Be Regulated

WASHINGTON ― Mark Zuckerberg’s original motto for Facebook was “Move fast and break things.” It now appears that the CEO is going to have to answer for moving too fast and breaking too many things.

After years of trying to avoid oversight from Washington, the social network, which has 2 billion users, is set for a reckoning. This past week, Facebook faced its first major congressional oversight hearings since it revealed that a Russian “troll factory” called the Internet Research Agency had purchased ads on the site to influence the 2016 election.

In three committee hearings, representatives from Facebook, Google and Twitter were grilled about their sites’ roles in facilitating the foreign influence operation. Lawmakers from both parties poked at the companies’ failure to reckon with questions about the lack of transparency in online advertising and the vast power they hold over our lives.

Sen. John Kennedy (R-La.) took particular umbrage at Facebook: “Your power sometimes scares me.”

Kennedy got Facebook general counsel Colin Stretch to essentially admit that it was impossible for the company to keep track of the 5 million monthly advertisers on its platform. Stretch also ducked and dodged questions about how much user information his company had on specific individuals and whether it could compromise their privacy in search of advertising dollars.

Sen. James Lankford (R-Okla.) told Stretch that he preferred a light regulatory touch on industry and then added that dealing with Facebook was “something we hope we don’t have to engage in legislatively.”

“You are going to have to do something about this or else we will,” Sen. Dianne Feinstein (D-Calif.) said bluntly.

The hearings may have put the fear of God into the tech companies. Ever since the boom in online commercial activity in the 1990s, Silicon Valley has fought to avoid any kind of regulation, taxation or oversight from government at any level, whether state, local or federal. Facebook is now trying to fend off federal oversight. The company has hired two crisis PR firms and registered two new lobbying firms this year. Chief Operating Officer Sheryl Sandberg appeared at official events in Washington ahead of the hearings and gave an exclusive interview to Axios’ Mike Allen.

But what would regulation for Facebook even look like? We asked the experts.

Time to investigate

Determining how to regulate Facebook may first require some kind of definition of what it is.

Reporter Max Read posed this very question in New York Magazine earlier this month. What do you call a website that allows you to share baby pictures with former high school classmates and also receive targeted advertising with the most fine-grained precision possible? Facebook brags about connecting us to our family and friends — and also about directly influencing the outcomes of elections across the globe. It sits on top of industries including journalism, where it, together with Google, essentially controls the distribution channels for online news and, in effect, the way people discover information about politics, government and society.

Facebook, Read argues, is like “a four-dimensional object”: “we catch slices of” it “when it passes through the three-dimensional world we recognize.” That makes it very hard to get a handle on.


The best way to figure out exactly what Facebook is and does is to launch a public investigation, argues Matt Stoller, a fellow at the Open Markets Institute, a think tank that supports tougher antitrust enforcement.

“We need a select congressional committee to just look at Facebook and figure out all the things it does,” Stoller said.

Such a committee could examine Facebook’s ownership of multiple social networks (it owns Instagram and tried to buy Snapchat). An investigation could look at the company’s use of virtual private networks (VPNs) to gauge which apps are popular and identify good takeover targets. Or it could scrutinize Facebook’s massive advertising business, including how it is used to influence elections or to target retail ads at users immediately after they look at a product on another site. More broadly, a committee could determine how such a large corporate enterprise works and how it affects the public, suss out anti-competitive behavior, examine and remedy invasions of people’s privacy, and find out whether the company is undermining human autonomy.

What about legal changes?

While activists like Stoller push for a dedicated committee to investigate Facebook, the federal bureaucracy and some members of Congress are working on fixing the problem of untraceable political advertising.

Sens. Mark Warner (D-Va.) and Amy Klobuchar (D-Minn.) are drafting legislation to require large online platforms like Facebook, YouTube (owned by Google), Instagram and Snapchat to maintain public databases of all political advertising, including ad buyer and targeting information. The Federal Election Commission reopened its consideration of disclosure requirements for online election advertising and is currently seeking public comment.

Stoller proposes that Facebook go further by informing each user who was specifically targeted by ads purchased by the Internet Research Agency.

Facebook’s Stretch told the Senate Intelligence Committee that the “technical challenges” of determining which users were exposed to the propaganda and then contacting them “are substantial.”

But Warner called the idea of reaching out to affected users an “interesting question about what obligation you might have.” He compared it to legal requirements that medical facilities inform patients if they may have been exposed to a disease.

Yochai Benkler, the Berkman professor of entrepreneurial legal studies at Harvard Law School, has suggested another change: Lawmakers could pass a bill to require social networks to identify bots (automated accounts) and “sockpuppets” (fake accounts run by real people) so as to detail their role in spreading political advocacy advertising. No legislation has been discussed yet to tackle the problem of social media bots spreading paid propaganda.

Aside from the political advertising issue, Stoller believes that Facebook’s dominant position in social media ― the company is often referred to as a monopoly ― could be remedied by forcing it to become interoperable with other social sites. He likened this to a 2001 Federal Communications Commission edict requiring any new video-chatting service provided through AOL Instant Messenger to be interoperable with competitors’ video-chat products.

What about privacy?

To privacy groups, the issue of political advertising is really just a subcategory of problems with large online platforms they have complained about for years. Companies like Facebook and Google derive most of their revenue from advertising. They essentially constitute a duopoly because they have access to the best data about individuals. Every memory, picture, emoji, song, video, link, gripe, fear, hope, want, dream and bad political opinion posted is mined and monetized as data.

The more precise the data, the better advertisers and marketers can target a segmented audience, like “Jew haters” on Facebook or people who search Google for terms like “black people ruin everything.” (Those terms have since been disabled for ad targeting.) Because they have the largest data sets, Facebook and Google can target ads more precisely than any other online provider.

“At the end of the day, how [Facebook and Google] conduct their businesses undermines privacy and raises questions about ethical behavior in the uses of our information and their role in society,” Jeffrey Chester, executive director for the Center for Digital Democracy, a pro-privacy nonprofit, told HuffPost.

Both Chester and Stoller pointed to Europe’s soon-to-be-implemented online privacy rules, known as the General Data Protection Regulation (GDPR), as a model the United States could use to curb privacy abuses and let users keep control over their online lives. The GDPR will give individuals control over their online information by making it accessible and removable at their request. It will also give individuals the right to refuse to let their data be harvested and used by sites like Google and Facebook or by third-party data brokers that collect the information and resell it to ad networks.

Inside the filter bubble

Complaints about Facebook’s influence on society extend beyond the way advertising is targeted or the way users sign away their privacy. The distribution of false news designed to inflame users’ opinions also has activists worried.

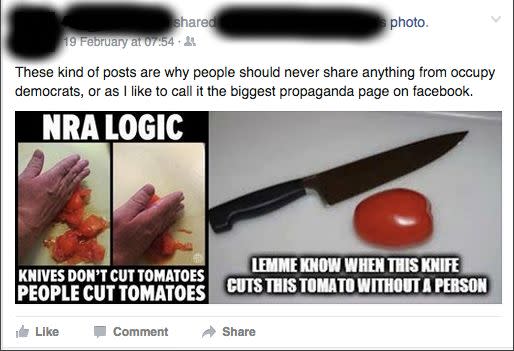

In 2011, progressive activist Eli Pariser wrote The Filter Bubble, which warned that social media algorithms were creating and reinforcing divided and insular online communities that did not interact with people or information with which they disagreed. A number of websites — particularly, but not exclusively, those skewed to the right — have figured out how to take advantage of this dynamic to distribute false information about political candidates and hot-button political issues in order to drive up traffic and advertising revenue.

If you were caught in one of these fake-news Facebook bubbles, you might have thought that Pope Francis endorsed Donald Trump, George Soros pays protesters to appear at Trump rallies, Hillary Clinton sold weapons to Islamic State terrorists, the Chobani yogurt company was importing Muslims to rape Americans, or Clinton and her campaign chairman John Podesta ran a child sex ring out of a pizza shop in Washington, D.C. That last claim led a North Carolina man to fire shots inside the crowded pizzeria in December 2016 in an attempt to rescue the non-existent sex slaves.

But Facebook isn’t legally responsible for the fake news that appears on its site. Section 230 of the Communications Decency Act of 1996 states, “No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.” This provision is a fundamental legal building block of most of the online products people use. Google cannot be sued for showing false or libelous content to people who search for it, and the same goes for what people post and share on Facebook.

According to Jonathan Taplin, a critic of Silicon Valley and author of Move Fast and Break Things: How Facebook, Google, and Amazon Cornered Culture and Undermined Democracy, this provision is also “the existential problem” of large online platforms, because it gives them too much protection for what gets said on them.

Publishers have actual legal responsibility for what they allow onto their sites. The New York Times can be sued based on what it publishes, Taplin noted. Facebook, however, cannot.

“They are given protections that no one can sue them for any reason — that is Google and Facebook — that’s unlike the protection that your publication has or NBC News has or The New York Times has,” Taplin told HuffPost. “They are completely shielded from any responsibility for the content that appears on their service.”


Changing this legal protection would likely devastate online platforms like Google and Facebook and transform the way people interact across the entire internet. Judges have interpreted it to provide safe harbor for online platforms even when they pay to distribute others’ content and decline the option to impose editorial oversight. Without it, sites like this one could be held responsible for libelous comments posted by readers, Google could lose lawsuits over potentially false or defamatory information surfacing in search results, and Facebook could be sued for any potentially libelous comment made by anyone on its platform against any other person. The legal bills to defend against libel and defamation claims would be enormous.

Zuckerberg’s plan

Zuckerberg is already working to get ahead of any federal regulation. He announced in September that Facebook would create its own database of advertising on its site to make clear who is buying what. Critics of Facebook in and outside Congress said this was a positive move, but warned that the public cannot rely on the company’s self-regulation.

And self-regulation can raise more questions than it answers. Facebook’s new system for labeling fake news helped reduce sharing of labeled links by 80 percent, according to a BuzzFeed report. But what qualifies as fake news? This program could be used, and will certainly be interpreted, as a way to suppress disfavored or fringe ideas.

“Facebook is too immunized from competition to be left to adopt self-regulation,” Benkler, the Harvard law professor, said. “There is certainly room for some self-regulation in response to public pressure, but basically these companies are monopolies or near-monopolies in their areas of operation, and the only way to achieve desirable outcomes is through clear, effective regulation.”

When asked to comment on possible regulation targeting the company, Facebook spokesman Andy Stone told HuffPost, “We are open to working with legislators and reviewing any reasonable legislative proposals.”

Congress certainly isn’t done with Facebook or the other tech giants. Lawmakers sent Facebook’s representative home with a list of questions to answer. They expressed some irritation that Zuckerberg and the CEOs of the two other companies had sent their lawyers to testify before the committees.

“I wish we had the executives of your three companies here with us today,” Sen. Chris Coons (D-Del.) said.

On Facebook’s quarterly earnings call, which was held immediately after the conclusion of the final congressional hearing, Zuckerberg declared that the usual indicators of corporate success don’t matter “if our services are used in ways that don’t bring people closer together.”

“We’re serious about preventing abuse on our platforms,” he said. “We’re investing so much in security that it will impact our profitability. Protecting our community is more important than maximizing our profits.”


This article originally appeared on HuffPost.